For me the big change is not necessarily the increases in CPU and memory capabilities – I think for many customers these went beyond what production needs some time ago – the big change comes with the end of the 2TB limit on virtual disks, with VMDK sizes now up to 62TB.

A brand new VM Version 10 VM supports these capabilities natively.

During the creation of the VM you can expand the New Hard Disk option, change the increment to TB, and set the size you want.

VMware was first to really introduce the “monster VM” – and the monster in the datacenter just keeps on growing. So much so that I think we are going to have to invent a new label to replace the term monster.


These challenges go some way to explaining why Windows 2012 Hyper-V has an add-disk, then copy from old disk to new disk process, which takes time based on the volume of data and the speed of storage – and also requires the VM to be powered off. So your mileage will ultimately vary based on the limits and features of the file system and guest operating system.

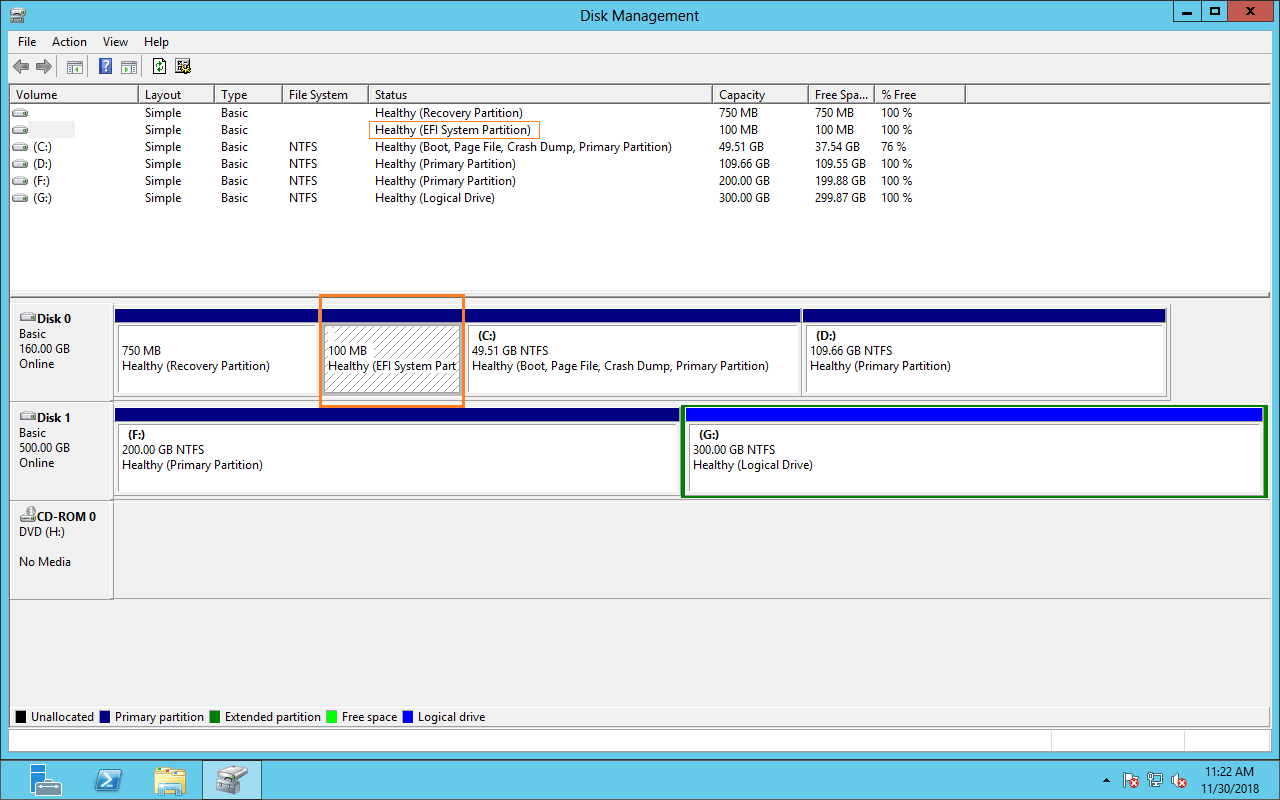

VMware vSphere 5.5 supports 62TB disks – it’s not 64TB because we reserve 2TB of disk space for snapshots etc. To take a disk beyond the 2TB range you do currently have to power off the VM. However, you can make the virtual disk bigger without a special conversion process that involves a lengthy file copy – unlike a certain vendor up in Seattle.

Disks with MBR need “converting” to GPT to go beyond the 2TB boundary. Currently, Windows 2008/2012 have no native tools to do this, however 3rd party tools do exist.

Even once the VMDK in VMware and the disk in Windows are 62TB, you may still face the issue of a small “allocation unit” or “cluster” size within the NTFS partition. NTFS defaults to a 4K “allocation unit” size on smaller partitions, but to access a 62TB partition you need an “allocation unit” size of 16K or higher.
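The 2TB MBR boundary and the 16K allocation unit figure mentioned above both fall out of simple arithmetic. Here is a rough Python sketch of that arithmetic. It assumes 512-byte logical sectors for MBR and a limit of 2^32 − 1 clusters per NTFS volume (the limit in the Windows versions discussed here); the function names are mine, purely for illustration, and are not part of any VMware or Microsoft tool.

```python
TIB = 1024 ** 4  # bytes in one binary terabyte (what the post loosely calls "TB")

# Assumption: MBR stores sector addresses in 32 bits, with 512-byte sectors.
MBR_MAX_BYTES = (2 ** 32) * 512

# Assumption: NTFS in Windows 2008/2012 addresses at most 2**32 - 1 clusters.
NTFS_MAX_CLUSTERS = 2 ** 32 - 1

# Allocation unit sizes to consider, in bytes (4K up to 64K).
CLUSTER_SIZES = (4096, 8192, 16384, 32768, 65536)


def ntfs_max_volume_bytes(cluster_bytes):
    """Largest NTFS volume addressable with the given cluster size."""
    return NTFS_MAX_CLUSTERS * cluster_bytes


def smallest_cluster_for(volume_bytes):
    """Smallest listed cluster size that can address the whole volume, or None."""
    for cluster_bytes in CLUSTER_SIZES:
        if ntfs_max_volume_bytes(cluster_bytes) >= volume_bytes:
            return cluster_bytes
    return None


if __name__ == "__main__":
    print("MBR limit in TiB:", MBR_MAX_BYTES / TIB)
    for size in CLUSTER_SIZES:
        limit_tib = ntfs_max_volume_bytes(size) / TIB
        print(f"{size // 1024}K clusters: up to about {limit_tib:.0f} TiB")
    print("Cluster size needed for 62 TiB:", smallest_cluster_for(62 * TIB))
```

Under those assumptions a 4K allocation unit tops out at just under 16TB and 8K at just under 32TB, which is why a 62TB partition needs 16K or higher.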